This chapter provides an overview of aviation displays and automation, and it explores the relationship between emerging technologies and information overload in the cockpit. Aircraft displays are the pilot's window on the world of forces, commands, and information that cannot be seen as naturally occurring visual events or objects.

In a study of landing point redesignation for lunar landers, the pilots completed the task in an average of 20 s. The number of identifiable terrain markers, landing points of interest, and the pilot's expectation of terrain features had effect sizes too small to be detected in this experiment. Human performance was found to be best when fuel consumption was weighted as less important than system safety or proximity to points of interest. Under these same conditions, the human pilot was also observed to perform better than a reference automated system. The fuel penalty associated with prolonged decision time remains the largest detriment to human performance in the landing point redesignation task.
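The dependence of the result on how fuel is weighted can be illustrated with a small sketch. It assumes performance is scored as a normalized weighted sum of safety, proximity, and fuel terms; that scoring form, the `SiteScore` class, the `performance` function, and every number below are hypothetical and are not taken from the study.

```python
from dataclasses import dataclass


@dataclass
class SiteScore:
    """Hypothetical normalized scores in [0, 1] for one landing site choice."""
    safety: float     # higher means a safer site
    proximity: float  # higher means closer to points of interest
    fuel: float       # higher means less fuel spent


def performance(site: SiteScore, w_safety: float, w_proximity: float,
                w_fuel: float) -> float:
    """Weighted average of the three scores (an assumed definition)."""
    total = w_safety + w_proximity + w_fuel
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted = (w_safety * site.safety
                + w_proximity * site.proximity
                + w_fuel * site.fuel)
    return weighted / total


# Made-up outcomes: a deliberate choice finds a safer, closer site but
# burns more fuel while deciding; a quick choice does the opposite.
deliberate = SiteScore(safety=0.9, proximity=0.8, fuel=0.4)
quick = SiteScore(safety=0.6, proximity=0.5, fuel=0.9)

# Two made-up definitions of performance.
fuel_light = dict(w_safety=0.45, w_proximity=0.45, w_fuel=0.10)
fuel_heavy = dict(w_safety=0.15, w_proximity=0.15, w_fuel=0.70)

for name, weights in (("fuel_light", fuel_light), ("fuel_heavy", fuel_heavy)):
    print(name,
          round(performance(deliberate, **weights), 3),
          round(performance(quick, **weights), 3))
```

With these illustrative numbers the deliberate choice scores higher when fuel carries little weight, and the ranking flips when fuel dominates, which is the kind of sensitivity a performance contour over the weights would expose.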

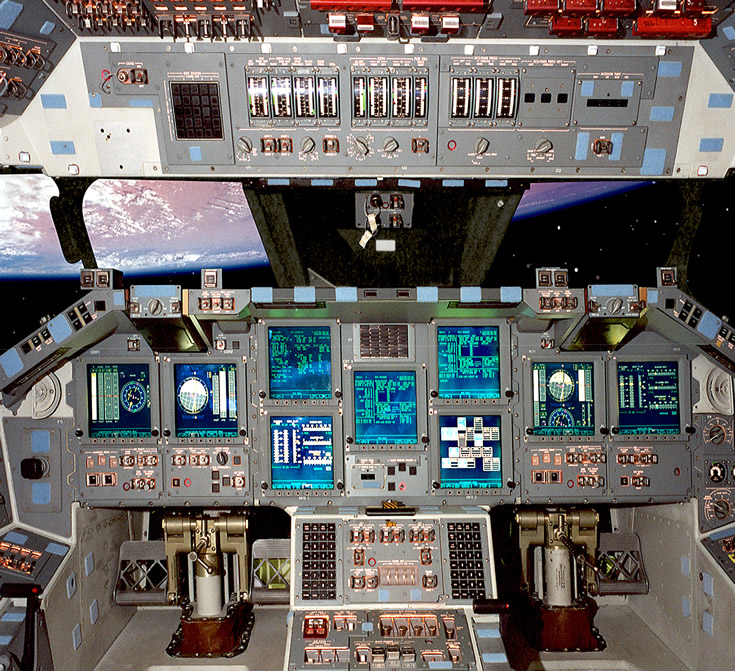

The requirements for the next generation of lunar landers include the capability to land in terrain that is both poorly lit and hazardous, which increases the importance and difficulty of an already critical mission task known as landing point redesignation. During this task, the crew interacts with an on-board automated flight system to finalize the touchdown point. This paper presents the results of a study conducted to quantify pilot performance and task completion time during landing point redesignation, the effect of environmental and mission parameters on those measures, and a design space exploration using human performance contours for different definitions of performance. Additionally, this paper presents the analysis of a comparison between a reference automated system and the pilots' site selection performance.

Compared to today's missions, NASA's next generation of exploration missions will require new cockpit user interfaces and display formats that enable astronauts to operate their vehicles with less real-time assistance from the ground. To achieve a more autonomous concept of vehicle operations, the interfaces will have to ensure an optimal level of crew-vehicle interaction and minimize the possibility of crew error. This level of optimization is not guaranteed by the traditional approach to cockpit user interface display design and evaluation, which relies on building crewstation mockups and monitoring crew performance in high-fidelity simulations of crew-vehicle operations. This approach is too expensive and resource-intensive to permit more than a small number of design options to be evaluated. In this paper, we describe an ongoing program to develop a more automated evaluation approach via the integration of the Apex reactive planning system from the Artificial Intelligence community and a human performance modeling tool, the Man-Machine Integration Design and Analysis (MIDAS) simulation engine from the Human Factors community. MIDAS provides a comprehensive toolset for describing crewstation designs and for running simulated missions to determine an astronaut's expected performance with those designs. However, its procedural specification language is not sufficiently powerful to model the complexities of spaceflight rules; the Apex reactive controller provides the rich set of features required. We describe this integration and the significant utility of the application it enables. We present experimental results that show a high correlation between the system's predicted human performance and results obtained from physical simulations of fault detection, isolation, and recovery operations in ground-based simulations of shuttle ascents.

A shuttle crew's ability to manage the health of the spacecraft systems is compromised by the limited capabilities of the onboard health management technologies, many of which date from the 1970s and 1980s. Most notably, the Caution and Warning system does little more than generate auditory alerts and fault messages in response to out-of-limit sensor readings. Today's health management technologies have much more extensive capabilities that range from detecting off-nominal trends and data patterns to executing fault isolation and recovery procedures. On next-generation spacecraft, these technologies could be harnessed to replace the traditional Caution and Warning system with a decision and action support system that assists the crew with all aspects of real-time health management operations. We discuss several aspects of the design of such a system, including human-machine functional allocations, user interfaces to enable and support human-machine interaction, and methodologies for testing and evaluating collaborative operational concepts and associated user interfaces.
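The gap between alerting on out-of-limit sensor readings and detecting off-nominal trends can be made concrete with a minimal sketch. It is not the shuttle's Caution and Warning logic or any flight software: the function names, the limits, the slope threshold, and the pressure samples are all hypothetical, and a least-squares slope stands in for whatever trend detection a real health management system would use.

```python
from typing import Sequence


def out_of_limit(reading: float, low: float, high: float) -> bool:
    """Classic limit check: alarm only once a reading leaves [low, high]."""
    return not (low <= reading <= high)


def off_nominal_trend(readings: Sequence[float], max_slope: float) -> bool:
    """Flag a drift when the least-squares slope of the readings
    (per sample) exceeds max_slope in magnitude."""
    n = len(readings)
    if n < 2:
        return False
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(readings))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return abs(num / den) > max_slope


# Hypothetical pressure samples that rise steadily but stay inside limits.
pressures = [50.0, 52.0, 54.0, 56.0, 58.0]

limit_alarm = any(out_of_limit(p, low=40.0, high=70.0) for p in pressures)
trend_alarm = off_nominal_trend(pressures, max_slope=1.0)

print("limit alarm:", limit_alarm)
print("trend alarm:", trend_alarm)
```

In this made-up case the limit check stays silent because every sample is within bounds, while the trend check flags the steady rise before any limit is crossed.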
